Archive in Gmail: What It Means and How to Use It

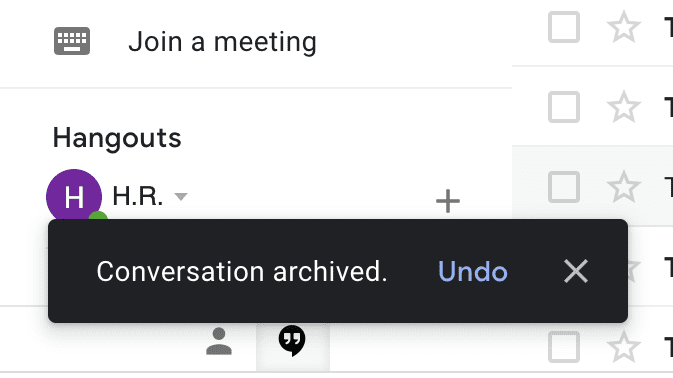

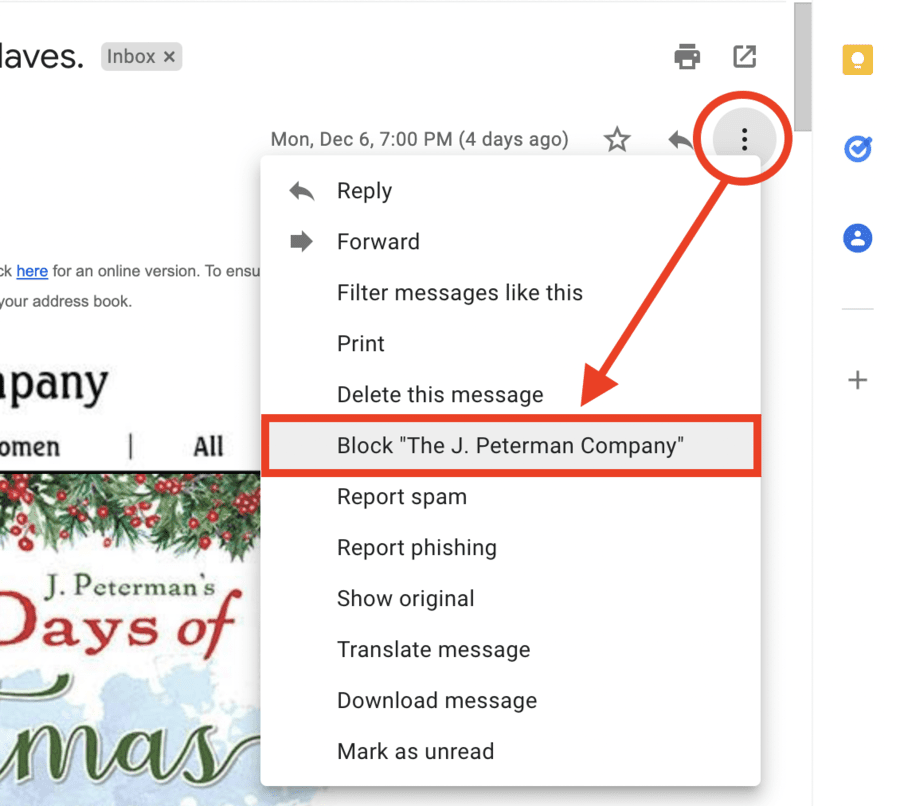

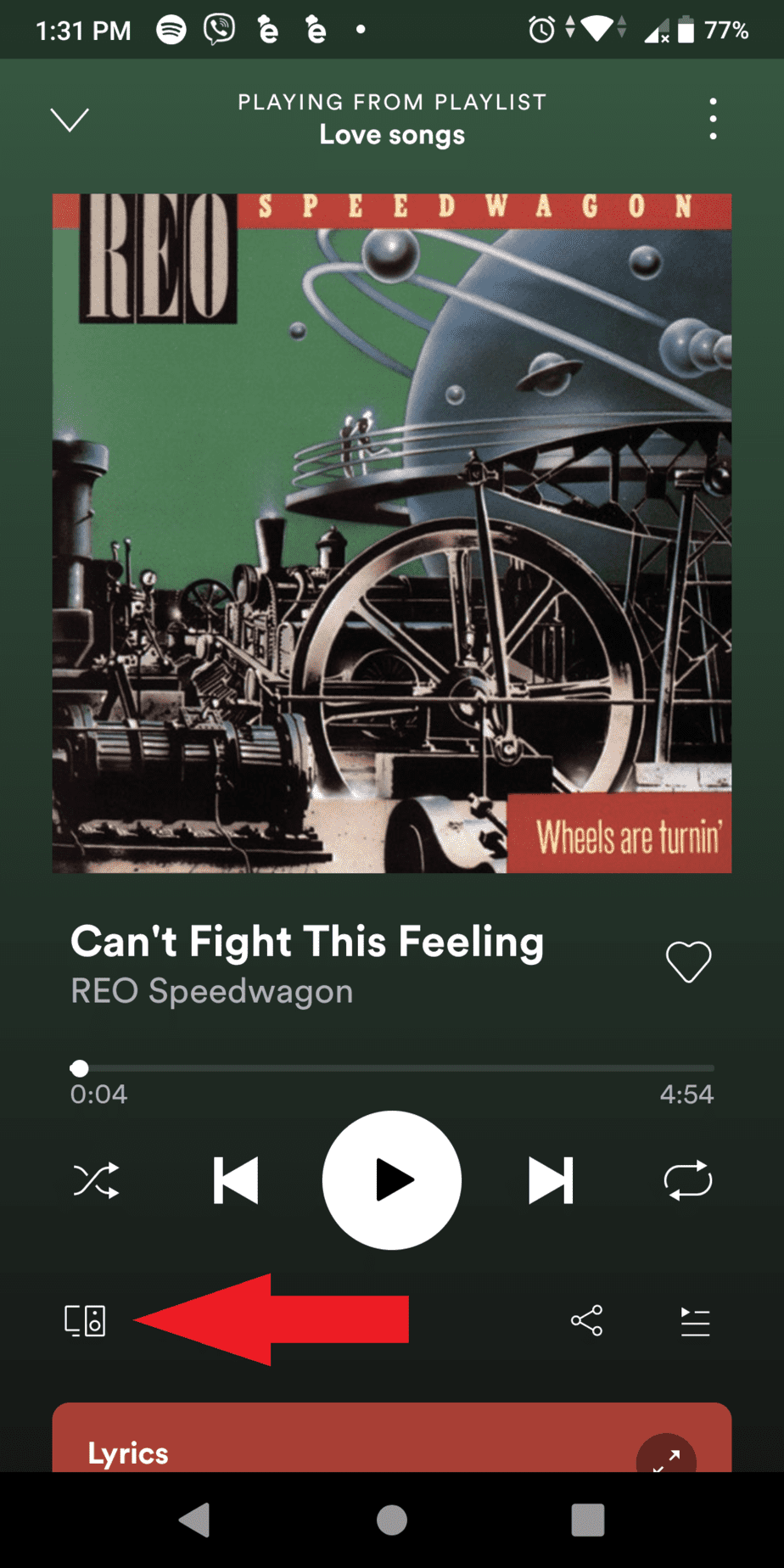

Archiving messages in Gmail can seem like a straightforward way to keep your Gmail account clean. However, because the archive function doesn’t work quite the way you might expect, it can be confusing, especially when you archive an email and then can’t find it. Read this guide to learn how, when, and …